Barry Chen (14-ERD-100)

Abstract

The ability to automatically detect patterns in massive sets of unlabeled data is becoming increasingly important as new, advanced types of sensors come into use. However, the biggest obstacle to applying machine learning to this and other areas of national security data science is the sheer volume of unlabeled data that must be analyzed. Deep learning is a computational approach for identifying patterns in such unlabeled data, and it has already been shown to often outperform traditional systems built on hand-engineered features. The goal of this project is to develop the learning algorithms that would form the basis of massive deep-learning neural networks capable of capturing complex spatiotemporal patterns in massive data sets, thereby helping to address nationally important problems. Our approach consists of three parts: (1) scale up existing deep-learning algorithms to run on high-performance computing platforms, (2) develop new deep-learning algorithms to model high-dimensional, time-varying signals, and (3) validate the new algorithms by applying them to three complex data problems: classifying audio and video content, detecting anomalies in wide-area aerial video, and modeling network behavior.

If successful, we will develop the world's largest deep-learning network, along with next-generation deep-learning architectures and algorithms that effectively identify complex spatiotemporal patterns in massive, unlabeled data sets, thus establishing LLNL as a leader in machine learning on high-performance computing platforms. We will also demonstrate statistically significant improvements in classification rates on three mission-relevant problems and develop the expertise needed to apply deep-learning methods effectively in a wide range of data-science applications.

Mission Relevance

By advancing the specific applications of classifying audio and video content, detecting anomalies in wide-area surveillance video, and modeling computer network behavior, our research is relevant to the Laboratory's strategic focus area in cyber security, space, and intelligence. This project also supports the Laboratory's national security mission by developing technologies that are broadly applicable to other mission-relevant applications, including general threat detection, system monitoring and prediction at Livermore's National Ignition Facility, and the validation of advanced manufacturing parts. Overall, the project is well aligned with LLNL's core competency in high-performance computing, simulation, and data science.

FY15 Accomplishments and Results

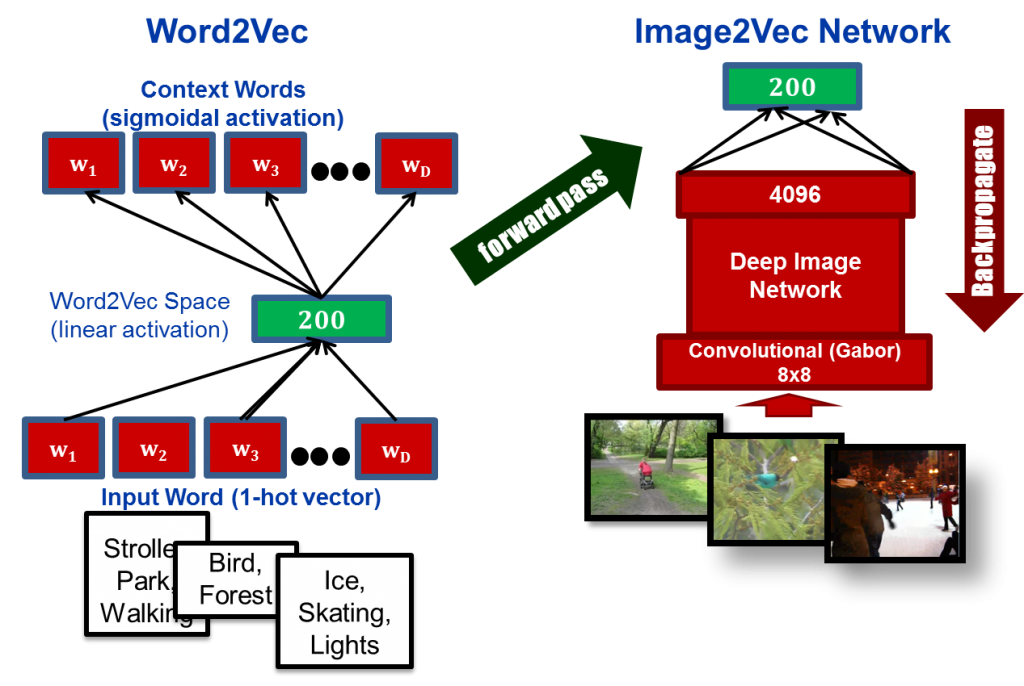

In FY15 we (1) developed a dynamic space–time receptive field network for action recognition and demonstrated performance gains relative to a static model; (2) developed a novel approach for learning joint text and image features by combining deeply learned image features with text features (see figure); (3) invented the compressive autoencoder, a new class of autoencoders (artificial neural networks used for learning efficient codings) that learn to predict future observations, which lets them better model temporal patterns and improves prediction performance; and (4) integrated a distributed matrix library with our neural network software, enabling networks with massive, fully connected layers on both central processing unit (CPU) and graphics processing unit (GPU) architectures.
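
Item (4) rests on the idea that a fully connected layer too large for one node can have its weight matrix split across processes. The report does not say how the distributed matrix library partitions a layer, so the following is only a minimal sketch under an assumed row-block partition, with the workers simulated in a single Python process. The names `partition_rows`, `local_matvec`, and `distributed_forward` are hypothetical and are not the API of LBANN or of any distributed matrix library.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def partition_rows(weights: Matrix, num_workers: int) -> List[Matrix]:
    """Split W into contiguous row blocks, one per (hypothetical) worker."""
    base, extra = divmod(len(weights), num_workers)
    blocks, start = [], 0
    for rank in range(num_workers):
        # The first `extra` workers take one additional row each.
        size = base + (1 if rank < extra else 0)
        blocks.append(weights[start:start + size])
        start += size
    return blocks


def local_matvec(block: Matrix, x: Vector) -> Vector:
    """One worker computes the output neurons for its own rows of W."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in block]


def distributed_forward(blocks: List[Matrix], x: Vector) -> Vector:
    """Simulate the workers and gather their partial outputs.

    On a real cluster each block would sit on a different node or GPU and
    x would be broadcast to all of them; a plain loop stands in for that.
    Because each worker owns disjoint rows, the gather is a concatenation.
    """
    y: Vector = []
    for block in blocks:
        y.extend(local_matvec(block, x))
    return y


# Usage: a 5x3 layer split across 2 simulated workers (3 rows + 2 rows).
W = [[1.0, 0.0, 2.0],
     [0.0, 1.0, 0.0],
     [3.0, 1.0, 1.0],
     [1.0, 1.0, 1.0],
     [2.0, 0.0, 0.0]]
x = [1.0, 2.0, 3.0]
blocks = partition_rows(W, 2)
print(distributed_forward(blocks, x))  # [7.0, 2.0, 8.0, 6.0, 2.0]
```

With a row partition every worker needs the full input vector but produces a disjoint slice of the output. Partitioning by columns instead would let each worker hold only part of the input, at the cost of a sum-reduction over the partial outputs.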

Publications and Presentations

- Boakye, K., et al., HPC enabled massive deep learning networks for big data. ASCR Machine Learning Workshop, Rockville, MD, Jan. 5–7, 2015. LLNL-ABS-662780.

- Ni, K., et al., Large-scale deep learning on the YFCC100M dataset. 2015. LLNL-CONF-661841. http://arxiv.org/abs/1502.03409

- Van Essen, B., et al., LBANN: Livermore big artificial neural network HPC toolkit. SC15 Workshop MLHPC2015: Machine Learning in HPC Environments, Austin, TX, Nov. 15–20, 2015. LLNL-CONF-677443.