Alan Kaplan (16-ERD-034)

Executive Summary

We are developing analytic approaches for large-scale, multidimensional biomedical big data, focusing on the relationship between neurological signals and patient behavior. The outcome of this project will extend Lawrence Livermore National Laboratory's distributed computing libraries, augment existing software infrastructure, and build additional deep-learning capabilities for graphics-processor-based computational systems.

Project Description

The Brain Research through Advancing Innovative Neurotechnologies initiative aims to revolutionize our knowledge of the human brain as well as advance treatment and prevention procedures for brain disorders. Researchers involved with this initiative work with biomedical "big data" and require algorithmic expertise, statistical methods, and high-performance computing resources to fully exploit the various datasets at scale. To help meet these requirements, we partnered with neuroscientists from the University of California, San Francisco (UCSF) in a project that lies at the intersection of neuroscience, statistics, large-scale data analytics, and machine learning. The partnership's aim is to develop novel analytic approaches for large-scale, multidimensional data acquired from patients monitored for clinical assessment. The dataset comes from a large population of patients surgically implanted with intracranial sensors that record the electrical activity of the cerebral cortex. Because the patients are monitored 24 hours per day for weeks, the resulting data are best suited to characterizing aggregate brain function and phenomena relating to longer-developing brain states. The dataset specifically targets the relationship between neurological signals and patient behavior, and we will use it to model relationships between brain activity and natural, uninstructed behavioral and emotional states. In addition, synchronized, high-definition video with audio will be collected to record unstructured (i.e., natural) patient behavior. By correlating neural signals with the events captured on video, we will attempt to relate brain function to observable behavior.

Using UCSF's one-of-a-kind dataset, the combined expertise of their neuroscience investigators, and Lawrence Livermore National Laboratory's statistical and machine-learning capabilities, as well as our world-class computational resources, we expect to develop novel approaches in neural signal analysis and multimodal modeling to determine the neural patterns underlying human activities observed from video. Expected results will contribute to the areas of brain-signal characterization, video analytics, and joint multimodal modeling. In brain-signal characterization, we will develop novel sparse-signal representations to isolate brain function. In video analytics, state-of-the-art algorithms in deep learning will be leveraged to extract relevant features and isolate time segments with potential signals of interest. Finally, modeling will be performed at both short and long timescales to build hierarchical temporal representations of underlying processes that jointly describe brain signals and human behavior. In addition, given that datasets for each patient are several terabytes in size, the algorithms we develop will extend the Laboratory's distributed computing libraries, augment existing software infrastructure, and build additional deep-learning capabilities for graphics-processor-based computational systems. Results from this project have the potential to yield new insights about basic and clinically relevant functions of the human brain, as well as about more practical aspects (e.g., information content) of these relatively new data sources.

Mission Relevance

Our research objective of developing analytic approaches for large-scale, multidimensional, biomedical big data directly supports the Laboratory's core competency in high-performance computing, simulation, and data science, as well as bioscience and bioengineering. This research supports DOE's goal to transform our understanding of nature and strengthen the connection between advances in fundamental science and technology innovation.

FY17 Accomplishments and Results

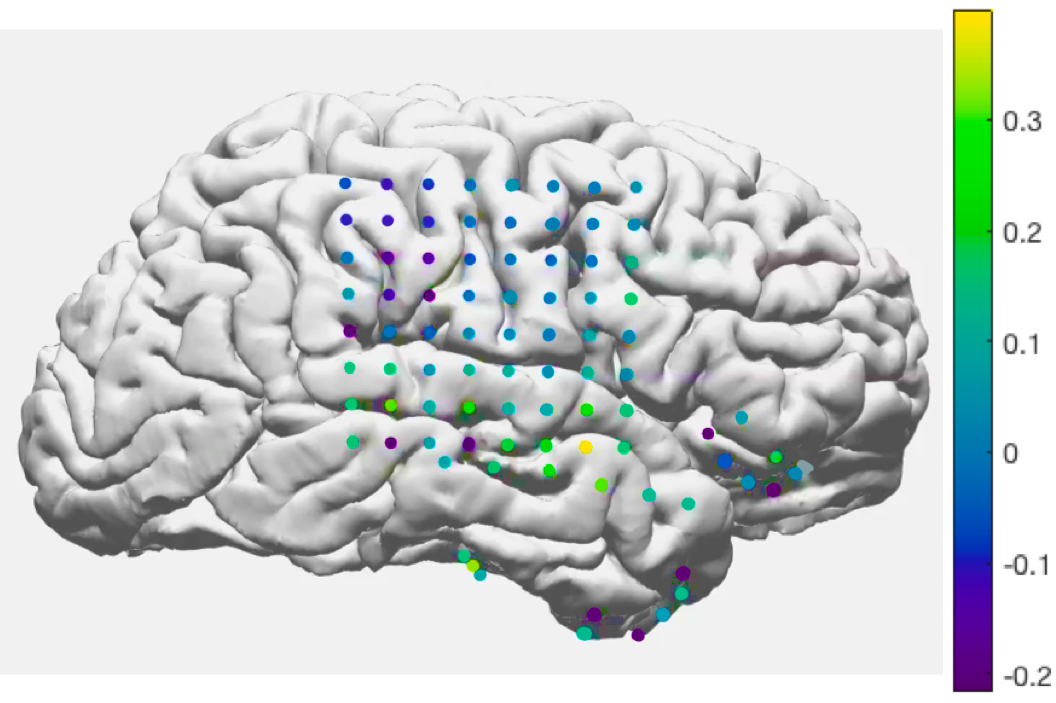

In FY17 we (1) received from UCSF a portion of the human electrocorticography data (electrophysiological monitoring that uses electrodes to record electrical activity from the cerebral cortex) and applied our local field-potential models to these data; (2) developed classifiers to distinguish both high-level and low-level patient states, including emotional effects; (3) designed and tested fully automated methods to detect poor signal quality in the electrocorticography dataset; (4) applied our image-based facial-expression recognition algorithm to a video dataset and added a face detector so that our approach will be better suited to the patient video data; and (5) implemented two approaches for detecting fine-grained action sequences from video and compared their performance to existing methods on a publicly available dataset.
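To make item (3) concrete, the sketch below shows one generic way that poor-quality channels in a multichannel recording could be flagged automatically. It is not the method developed in this project, which this report does not describe. The function name `flag_bad_channels`, the log-variance feature, and the threshold of 5.0 are all illustrative assumptions. The idea is that a disconnected or saturated electrode tends to have a signal variance far from that of its neighbors, so a robust outlier test on per-channel variance picks it out.

```python
import math
import statistics


def flag_bad_channels(recordings, threshold=5.0):
    """Return indices of channels whose log-variance is a robust outlier.

    recordings: list of channels, each a list of float samples.
    threshold:  cutoff on the robust z-score (hypothetical default).
    """
    # Log-variance per channel; the small constant keeps flat channels finite.
    log_vars = [math.log(statistics.pvariance(ch) + 1e-12) for ch in recordings]

    # Median and median absolute deviation (MAD) resist the outliers
    # we are trying to find, unlike the mean and standard deviation.
    median = statistics.median(log_vars)
    mad = statistics.median([abs(v - median) for v in log_vars])

    # 1.4826 scales the MAD to match a standard deviation for Gaussian data.
    scale = 1.4826 * mad
    if scale == 0.0:
        scale = 1e-12

    return [
        i for i, v in enumerate(log_vars)
        if abs(v - median) / scale > threshold
    ]
```

A production pipeline would likely add further checks, such as line-noise power or correlation with neighboring electrodes. The variance test alone is enough to catch flat and grossly over-amplified channels.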

Publications and Presentations

Kim, H., et al. 2017. "Fine-Grained Human Activity Recognition Using Deep Neural Networks." Poster presentation at Lawrence Livermore National Laboratory CASIS Workshop, 2017. LLNL-POST-731136.

Tran, E., et al. 2017. "Deciphering Emotions Using Convolutional Neural Networks on Video Data." Poster presentation at Lawrence Livermore National Laboratory CASIS Workshop, 2017. LLNL-POST-73142.